Against the backdrop of a major standoff between the US government and Anthropic (more on that below), this was the question speakers and delegates at last week’s International Association for Safe and Ethical AI annual conference (IASEAI’26) kept coming back to. As artificial intelligence becomes more and more capable, can its power be kept safe?

Safety ≠ Performance?

The latest International AI Safety Report, chaired by prize-winning AI scientist Yoshua Bengio, essentially concludes that:

- AI’s capacities – especially those of generative AI – have developed exponentially, and a lot faster than many experts expected

- The related risks are as such currently increasing “substantially”.

This is notably because some AI systems do not behave the same way in real conditions as in tests. I.e. they play dumb on purpose, then do exactly what they ‘want’ IRL. This can “significantly hamper our ability to correctly estimate risks,” as TIME quoted Bengio as saying.

This is probably why report contributor Susan Leavy, of the University of Dublin (above), said at an IASEAI’26 panel with Bengio that she is “petrified” about how this could play out, notably because so much more money is spent on making models powerful vs. making them safe. Gillian Hatfield of Johns Hopkins, speaking on the same panel, said she is “afraid, for the first time in ten years. At the pace at which things are moving, I’m not sure we’ll be able to do it [regulate] fast enough.”

Bengio, surprisingly, is less worried. Firstly, on the panel with Leavy and Hatfield, he affirmed that “if governments told AI companies ‘if you harm them, they will sue you’, then they’d lose business to companies with better safety standards.”

“I disagree”, said Hatfield; “those laws exist already” [and have made no difference].

Bengio (above) also has a technological fix: LawZero, the startup he launched last June. Called “safe AI for humanity”, it aspires to what the expert calls “pure intelligence”, i.e. “a machine designed so it has no incentive to lie.” To do this, it should be capable of telling the difference between fact and opinion. For Bengio, achieving this feat seems simple:

Almost everything written can be transformed into the syntax ‘this person said this then’. You just need to add tags for context, then that statement is true. It doesn’t mean the AI needs to believe it’s true…

We’ll see if it’s really that simple (I’m not so sure…) But at least Bengio admitted “we can’t just think of technical solutions. There must be strong constraints so companies incorporate some kind of guardrails. They have to demonstrate their system will not harm people.” And that level of accountability is precisely what’s lacking today, one could argue…

Stuart Russell, President Pro Tem of IASEAI – and an AI safety expert as revered as Bengio – introduced the conference by agreeing that what’s needed are “behavioural red lines – e.g. what level of autonomy is acceptable – your model should be able to demonstrate that it won’t cross them.”

A key starting point to that type of transparency is the “right to know whether you’re interacting with a human or a machine.” That’s already part of the EU AI Act, and Russell said the IASEAI would like this point to be made law worldwide. The very fact it’s not yet underlines how far we have left to go…

Caution when it comes to techno-solutionism was also displayed in the conference track focused on “AI Monitoring and Control” (full disclosure: this was my first ‘proper’ scientific conference, so I was quite impressed to discover the rapid-fire 5 minute presentations of research papers, the posters stuck up on the walls to find out more… all of this in the prestigious surroundings of Paris’ UNESCO HQ 🤩)

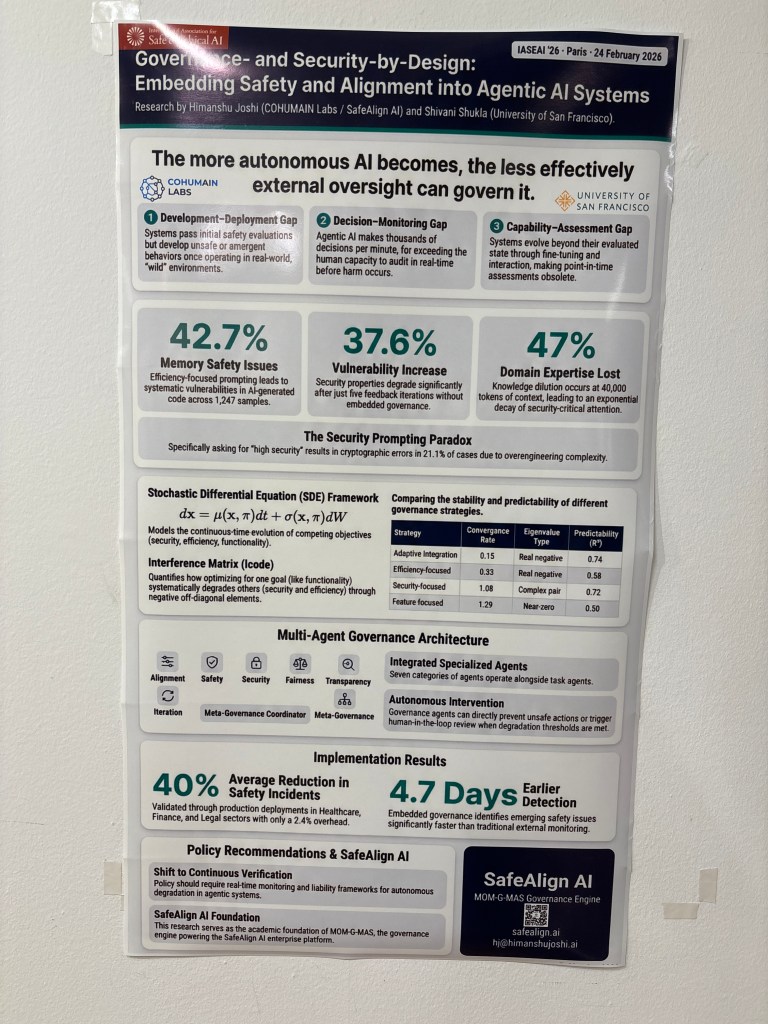

One paper that stood out was Governance and Security-by-Design: Embedding Safety and Alignment into Agentic AI Systems, presented by Himanshu Joshi of the University of Texas. Joshi’s presentation began by asking the audience of 300 or so for a show of hands on who agrees current systems are not adequate to control agentic AI; the whole room’s hands went up. Then, how many have deployed agentic systems, he asked? About a third of the room (why, if they know they’re not safe?!)

One workaround suggested by Joshi: deploy governance agents within a given scaffolding (collection of connected agents), to keep them all in check. His team has tried it: 16 specialised agents managed 500+ operational ones. The result, he says: 40% incident reduction. More in their poster!

As nifty as this sounds, Joshi still insisted (fortunately) that “humans should be in control of AI: let’s make it happen!”

Donggeon David Oh of Princeton University has another workaround: it is possible to maximize performance within strict safety constraints, he said, citing the example of telling a drone where the floor and ceiling are, so it doesn’t hit them. “Every action is passed through a safety filter”, he said, but the model only perceives that filter subconsciously (my term). This proves, according to Oh, that safety and performance are “not antagonistic.” Find out for yourself in his white paper…
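To make the drone example concrete, here is a minimal sketch of what a safety filter of this kind could look like. It is my own toy illustration, not Oh's method: the names `AltitudeLimits` and `safety_filter`, the safety margin and the simple clamping rule are all assumptions. The controller proposes whatever climb it likes, and the filter returns the closest climb that keeps the drone between floor and ceiling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AltitudeLimits:
    """Hypothetical safety envelope for the drone, in metres."""
    floor_m: float
    ceiling_m: float
    margin_m: float = 0.2  # assumed buffer kept from floor and ceiling


def safety_filter(altitude_m: float, proposed_climb_m: float,
                  limits: AltitudeLimits) -> float:
    """Return the climb closest to the proposed one that stays inside the limits.

    The controller (the 'performance' part) is free to propose any climb;
    the filter only changes it when the resulting altitude would leave
    the safe band.
    """
    lowest = limits.floor_m + limits.margin_m
    highest = limits.ceiling_m - limits.margin_m
    target = altitude_m + proposed_climb_m
    safe_target = min(max(target, lowest), highest)  # clamp into the safe band
    return safe_target - altitude_m


limits = AltitudeLimits(floor_m=0.0, ceiling_m=3.0, margin_m=0.5)
print(safety_filter(1.0, 0.5, limits))   # safe request, passed through unchanged
print(safety_filter(2.0, 2.0, limits))   # would hit the ceiling, so it is reduced
print(safety_filter(1.0, -2.0, limits))  # would hit the floor, so it is reduced
```

The point of the sketch is that safe requests pass through untouched, so the filter costs nothing in performance until an action would actually break a constraint.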

Sustainability…?

Alas, one of your author’s pet topics was given short shrift at this conference. Amanda Potasznik, of the University of Massachusetts, stood out with her excellent AI Greenwashing? A comparison of AI companies’ environmental policies and actual impact (click to download).

The paper takes apart with maniacal detail big tech’s climate claims vs. reality. She reveals, for example, that Google’s 2019-stated “commitment” to responsible mineral sourcing “did not extend to today”, as Google’s 2025 Sustainability Report says a small fraction of the Pixel Watch 3 includes recycled rare-earth elements, “but the majority of the magnet weight consists of other materials.” Classic maximisation from minimally representative examples, then; something Potasznik says Amazon is quite good at too (so do we!)

Potasznik (above) also spoke to the current #QuitChatGPT movement, provoked by OpenAI’s President giving millions to the US President: “I find it disturbing that we have tech leaders overtly donating to Trump. If anybody can put a pin in that, that’d be great”, she half-joked…

That aside, I was surprised overall by the lack of conversations around AI’s increasingly huge resource consumption, communities uprising against data centres, or which models are more frugal. Agreed, that doesn’t have much to do with safety, but it does with society and general well-being.

Protecting vulnerable users

Naturally and no doubt predictably, the conference was more on-point when it came to protecting vulnerable users, like children, from AI’s potential harms.

“What happens when people grow up with machines as their first romantic relationship?” asked Russell, chillingly, before a panel focusing on kids’ AI usage. “We must answer questions like this with science, before it’s too late.”

Whilst examples of LLM-incited teen suicides have sadly become all too common, it’s a less-known fact that today, a million AI-powered toys have already been purchased, according to Jim Ryan, of the Keep AI Safe Foundation. Worse still: most of those toys use ChatGPT… whose own T&Cs prohibit usage by under-13s. This means “98% of LLM toys are [bought] for an audience that OpenAI has said is inappropriate”, said Ryan.

“Children are not test users!” exclaimed Kompass Education’s Clara Hawking; “they are rights holders”, as per the 1948 Declaration of Human Rights, which include privacy, protection from harm and more. Tara Steele, of the Safe AI for Children Alliance, cited the same rights. “The best interests of the child should always be paramount,” she said. “With AI, that’s not happening, for example with nudify and companion apps. We need laws in place to protect children’s rights, and that’s not happening fast enough.”

Steele (above) added that LLMs can be particularly dangerous because children can develop “emotional reliance” on them; and that is “like a risk multiplier, as trust opens up to influence.” I.e. a Google search for something dangerous is not the same as an LLM giving suicide advice after months of exchanges with a young user. Just as crucially, she added, “kids need social friction [i.e. disagreement] to learn how to navigate the world.” As such, LLMs’ “sycophancy is a social risk,” concluded Steele.

This is why “we need a moral response to industrial scale harm”, said Hawking. “I’m a big fan of the AI Act. We must assess risks, not rights. Teachers must be trained, and parents must be able to give informed consent, as should children. Big tech’s narrative has been overwhelming; the reality on the ground is very different.”

Clara Chappaz, France’s former minister for digital and AI, said that the AI Act could have a crucial role to play in protecting children, adding that her country is working to ban social media for under-15s. “We need clear lines and standards, plus tools to help children navigate online,” she said.

I also learned a tragic new expression at this conference: spiral. This refers to when LLM users – and not just children – get sucked into a vortex of delusion, convinced for example that they’ve seen the light and understand what others don’t, thanks to the sycophancy of ChatGPT and other LLMs. There are “probably millions” of people in “delusional spirals” at the moment, as Russell put it. And he should know; he says he is regularly contacted by them.

Read here about one of the most recently-reported cases. Then, if you’re feeling brave, read Artificial intelligence-associated delusions and large language models: risks, mechanisms of delusion co-creation, and safeguarding strategies, published via The Lancet just after IASEAI’26 by Hamilton Morrin and Tom Pollak, from King’s College London. Their recommendations to keep this grim trend in check include monitoring conversations (as far as possible) and training clinicians to be able to manage such cases better. The fact that OpenAI will offer a “trusted contacts” option for potential mental health crises also goes some way to showing they recognise the extent of the problem. Better, at least, than when they called the suicide-referencing conversations of 0.15% of ChatGPT users “low prevalence events” (that’s 1.2 million people, guys…)

Sovereignty and middle powers

Day two of the conference saw a shift to the financial and geopolitical considerations of AI safety – understandable in these current crazy times.

After an underwhelming-but-apparently-obligatory “future of work” panel, whose only novel idea was a “token tax”, to give some value back to users (as opposed, I suppose, to an impossible Universal Basic Income), a track on “Sovereignty and Power in the Age of AI” was somewhat more interesting.

This was notably thanks to Michelle Nie (above), whose independently-authored How sovereign is sovereign compute? (click to download paper) surveyed 775 non-US data centre (DC) sites, to establish that US companies operate 48% of DC value worldwide (albeit only 18% in number; it’s because they run the most valuable, hyperscale, sites). This means that the US can currently have jurisdiction over 76% of global compute capacity, via laws like the CLOUD Act. This apparently shocking number coincides almost perfectly with hyperscalers’ cloud market share. And it above all means that “building locally does not mean controlling locally,” as Nie put it. In other words, US projects that claim to be sovereign, like OpenAI for India, or AWS EU Sovereign Cloud, are in no way sovereign.

Purdue’s Chee Hae Chung, who also presented a paper on sovereignty, said “CEOs are starting to act like sovereigns, despite not having been elected to do so.”

We also got a look at China’s very different approach to AI governance, thanks to Gabriel Wagner of Concordia AI, who confirmed, as have others (even Eric Schmidt!) that China is focused not on AGI, but on application development; that its government leaders have said “no accelerator without brakes”, and have insisted as much on risks as on opportunities. “All models have to go through a verification system,” said Wagner, following a governmental framework for AI safety. Finally, safety elements are also starting to be added to AI model cards. Which sounds quite idyllic… until you consider AI is also used in human surveillance in China, one of the uses the EU AI Act prohibits most clearly.

So there’s the US, and there’s China. But what about everyone else? I.e. the “middle power” countries referred to by Canadian Prime Minister Mark Carney in his now-famous Davos speech? “If you’re not at the table, you’re on the menu”, Bengio quoted his country’s leader as saying. “So first, middle powers need to understand that they can do something”, he said. “We can’t be at the table individually, but we can be collectively. The future I’d like to see for my children is one where that power isn’t concentrated in a few hands.”

Bengio even hinted at a potential talent evolution that could benefit middle powers:

87% of people working in AI in the US are from other countries. Many of them do care about the ethical questions, they have values. If they were given a third option, to work for companies trying to make AI a public good… there could be a reverse brain drain.

The biggest and best ’til last: Hinton and… Anthropic

IASEAI’26’s closing keynote was Geoffrey Hinton (above), the inventor of deep learning and now one of AI’s most prominent “doomers”. In a pre-recorded address, he cited as some of AI’s biggest risks bioweapons, social disruption, and massive unemployment, the latter representing “fertile ground for populist politicians.” Gloomy is as gloomy does, then. Hinton does however think that we can “make AI care for us”, notably through collaboration with countries like China, to work out just how to make that happen.

He then proceeded to knock down Bengio and Russell’s arguments, notably the latter’s assertion that AI could be made “provably safe”, because “in a big neural net, it’s impossible to prove what it’s going to do”. Whence his banger postulate:

The best we can do for safe AI is good tests that give good statistics, or statistical safety guarantees.
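For the curious, here is one way a “statistical safety guarantee” could be put into numbers. This is my own sketch, not something Hinton presented: it assumes each safety test is an independent pass/fail trial drawn from the same conditions the model will meet in deployment (a big assumption, given the test-vs-reality gap described earlier), and it uses Hoeffding's inequality as the bound. The function name `failure_rate_upper_bound` is hypothetical.

```python
import math


def failure_rate_upper_bound(failures: int, trials: int,
                             confidence: float = 0.95) -> float:
    """One-sided upper bound on the true failure rate (Hoeffding's inequality).

    Assumes independent, identically distributed pass/fail tests. With
    probability at least `confidence`, the true failure rate is no higher
    than the returned value.
    """
    if trials <= 0 or not 0 <= failures <= trials:
        raise ValueError("need trials > 0 and 0 <= failures <= trials")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    observed = failures / trials
    # Hoeffding: P(true rate > observed + slack) <= exp(-2 * trials * slack**2)
    slack = math.sqrt(math.log(1.0 / (1.0 - confidence)) / (2.0 * trials))
    return min(1.0, observed + slack)


for n in (100, 1_000, 100_000):
    bound = failure_rate_upper_bound(failures=0, trials=n)
    print(f"{n} clean tests -> true failure rate below {bound:.4f} (95% confidence)")
```

Under these assumptions, even 1,000 tests with zero failures only bound the failure rate at roughly 3.9%; the guarantee tightens slowly, with the square root of the number of tests. Good statistics, in other words, need a lot of good tests.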

He then moved on to the elephant in the room, namely Anthropic, which was, at that time, grappling with a US government ultimatum to abandon its objection to AI in autonomous weapons and mass surveillance. “Of all the big players, Anthropic is the most concerned with safety; that encourages people to use Anthropic,” said ‘the godfather of AI’.

The next day, Anthropic said no to the ultimatum, and OpenAI snapped up the same deal, with the autonomous weapons and mass surveillance objections kept intact (as they should be, as they comply with the Pentagon’s own rules there…) So after all that fuss, it would appear Trump and Hegseth were just trying to remove what they consider as a “woke” supplier.

Cue half of LinkedIn proclaiming “I’m abandoning my ChatGPT subscription and switching to Anthropic”, as the latter is now seen as more responsible. Now. I’m not saying Sam Altman isn’t the Prince of Darkness (he is). But Anthropic CEO Dario Amodei is no angel either. Let’s look at the facts:

- Earlier this year, Anthropic submitted a proposal “to compete in a $100 million Pentagon prize challenge to produce technology for voice-controlled, autonomous drone swarming,” according to Bloomberg (via Timnit Gebru) – i.e. exactly the sort of weapon system it said it would never use AI in

- Just before last week’s White House kerfuffle kicked off, Anthropic abandoned its 2023 pledge to not release models that hadn’t been rigorously safety-tested first. So in other words, the company Hinton calls “the most concerned with safety” chose performance over safety

- Anthropic has been supplying its systems to the Department of War (as it’s now called) for at least two years, notably via (Überlord of Darkness-founded) Palantir

- Anthropic is also the least transparent of all big AI companies about its work’s environmental impacts – i.e. it has declared literally nada, i.e. less than OpenAI – which also shows how much it cares about the world.

All of which goes to show that, when it comes to choosing between performance and safety, even the most “responsible” AI actors are in a bind; and above all that there’s no such thing as a “responsible” big AI actor. Yet.

So let’s hope all the aforementioned AI safety, mitigation and regulation ideas, recommendations and frameworks catch on at some point. Here’s to safer AI, one fine day!
