Well, this should be a quick post! Why? Because although it has the word “Impact” in its title, the India AI Impact Summit featured only a handful of sessions on the elephant in the room – AI’s considerable environmental impacts – even though “Planet” was one of its three main pillars, or sutras.

As usual, we couldn’t count on the giants of AI to say anything useful on the topic. Not long after refusing to hold hands on stage with arch-rival Dario Amodei, OpenAI CEO Sam Altman said: “people talk about how much energy it takes to train an AI model… But it also takes a lot of energy to train a human. It takes like 20 years of life and all of the food you eat during that time before you get smart.”

Seriously, Sam?! This is the same BS argument as that infamous Nature white paper that was shot down in late 2024 for a host of reasons, including the fact that it fully ignored all of AI’s other impacts: water, hardware, air pollution and more.

Fortunately, one panel got things less wrong than the head of the world’s biggest AI model maker: “Smaller Footprint, Bigger Impact: Building Sustainable AI for the Future” (watch in full here). Organised by France’s ever-proactive Ministry for Ecology (big up Hélène Costa & team), it began with French digital minister Anne Le Hénanff affirming that “sustainable AI is not an option, it’s an imperative… resilient and sustainable AI must be the baseline.” Hurrah!

UNESCO’s Tawfik Jelassi agreed, stating that “the future of AI will not be defined by scale alone, but by resilience”. Hence the Resilient AI Challenge, organised by the governments of France and India together with UNESCO, which will “demonstrate how open source models can be deployed to achieve strong performance whilst significantly reducing energy use”, said Jelassi. Winners of the challenge will be announced at the next AI for Good summit, in July.

There then ensued a panel which – to get straight to the point – essentially agreed that sustainable AI is good for business. French AI star Arthur Mensch, CEO of Mistral AI (above centre), put it best:

The good thing about AI is that we’re energy constrained, so efficiency is driven by business. So transparency is very important for our customers – we did a very deep study of that, the carbon intensity of our training, with 3rd party auditors etc – but business is also the reason we’re going to[wards] more efficient models. Because we don’t have enough energy, we need things that run on smaller hardware. And it depends on the country – e.g. in the US, the constraints [are] higher than in Europe, and [are] going to be higher in Africa and India too.

So it’s always good when business aligns with climate. I think it would be good for public procurement in particular to put more pressure [emphasis] on sustainability as a way to accelerate the industry, as that raises the stakes, and also pushes us towards more efficiency.

Now this is a great quote, for all sorts of reasons. Let’s first just point out its one limitation: the “deep study” that Mensch refers to was indeed the most detailed ever provided by an AI model maker. However, “carbon intensity” (of electricity) was the one thing it did not provide, as it gave no indication about energy whatsoever 🤔

That aside, business imperatives pushing the industry towards smaller models is a great concept, especially as US AI leaders are doing the opposite in their unending race towards AGI. Smaller models, of course, make even more sense in “constrained” markets, like India. Indeed, Abhishek Singh (above right), lead organiser of the AI Impact Summit, said:

It’s fashionable to go for trillion parameter models, but for specific usages, you’ll need smaller models. In India, we’re not chasing the parameter game or AGI, rather what models can be used for real impact. And when we do that, the cost per query becomes [a] real [priority].

Singh then referred to the highly practical example of using (probably non-generative) AI to improve grid efficiency, something he said has already been leveraged in India to “bring down energy losses by 10-15%”. “Business sense will ensure that sustainability comes in,” he optimistically affirmed.

“No one really talks about model size”, observed James Manyika, SVP at Google-Alphabet, the only other AI model-maker on the panel. After insisting on the US giant’s willingness to provide a full range of 20+ sizes of its (smallest) Gemma models, “especially in India”, he affirmed that Google wants to be “carbon free” in energy terms “by around 2035”… thereby admitting his company’s previous objective has slipped by five years. Oopsie!

Kenya’s tech ambassador Philip Thigo reminded us of the importance of sovereignty in the global AI equation. His country is “one of [the] world’s most energy-constrained”, according to moderator Anne Bouverot (head of the Paris 2025 AI Action Summit, above left), and “governments need to be aware of what parts of the AI stack are in their country”, said Thigo. He also insisted on expanding the notion of AI safety to include environmental safety, and on investing in standards, so we can learn about AI’s “environmental footprint from case studies”.

Singh seemed to agree, citing small modular reactors, or SMRs (which have yet to become a reality), as a way to take load off the grid:

As AI adoption goes up, energy costs go up, for an elected government that’s not great. We need to balance the boat… We can’t solve one problem and have [create] another.

So now it’s down to our panelists to ensure, as Bouverot put it, that “AI’s impact is not an afterthought, it’s front and centre”. Apt timing, considering the latest consultation of France’s AI Council, which she leads, ranked the environment as citizens’ second biggest concern about AI. Dont acte, to put it in Molière’s language… (i.e. “so let’s get on with it”) 🙂

Dr. Niladri Choudhuri, President of India’s Green Computing Foundation, agreed that “sustainable AI conversation was largely absent” from the Summit. A notable exception was the “From Buzzword to Blueprint” panel, which Choudhuri summarises on LinkedIn, here. The session covered 6 themes, notably the as-yet under-explored potential for CPU-powered enterprise inference workloads, “with 2-3x efficiency gains and 60-80% energy reductions”. CPU inference is something we and others have long pushed for, so it’s good to see it still being explored, especially in resource-strapped territories like India. “These are not theoretical propositions, but proven strategies delivering superior performance while dramatically reducing environmental impact and operational costs,” writes Choudhuri.

The Coalition for Sustainable AI, a grouping of 100+ companies looking to build an alternative to the US’ “bigger is better” approach, also took advantage of the Summit to release the updated version of its “Global Approach of Standardization for AI Environmental Sustainability”, which essentially draws together all global standards which can be used towards the goal of making AI more sustainable (above and here). Pretty, it is not: useful, it very much should be!

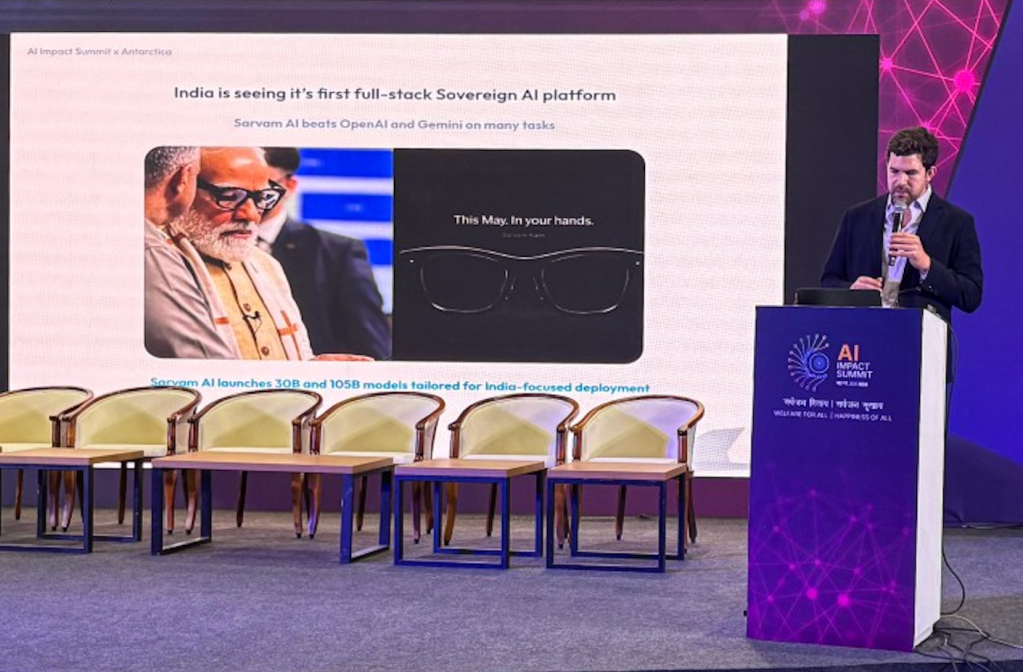

Elsewhere at the Summit, the only other sustainable AI conference we were aware of (I sure hope we are wrong!) was from Antarctica, an India-based startup run by French entrepreneur Mathieu François (above). Antarctica’s mission is “to reduce the environmental impact of IT systems and leverage technology to design software solutions that… contribute to shaping a climate-positive future”.

Considering 80-90% of enterprise AI energy costs come from inference, Antarctica’s observability dashboards give companies granular, real-time visibility of AI activity and impacts (separately from other IT workflows), including where the work is being done, the power and water efficiency of those data centres, and which hardware/GPUs are handling which workloads. This way, affirms François, “AI infrastructure decisions can be linked to business demand”.

Antarctica uses its own methodology, called the One Token Model, the fruit of three years’ research, according to François. Aligned with methods such as EcoLogits, it assesses impacts at the model, hardware and inference levels.

Given the biggest AI providers’ infamous and ongoing opacity when it comes to impact measurement, let’s say we need all the help we can get!

Especially if private and public sector leaders agree sustainable AI is good for business…

Excellent further reading via Nathaniel Burola: a full list of sustainability sessions (more than I thought) and resources from the Summit. Food for thought. But an AI+climate pledge would’ve been a great way to end the Summit, no? 🙄